
We're only going to be downloading the 7B model in this tutorial. With qBittorrent, you can download, upload, and even create torrents. Compared to other similar programs, it is easier to use and navigate.

You need to create a models/ folder in your llama.cpp directory that directly contains the 7B folder (or the folders for other models) and the other files from the LLaMA model download. Check the file structure of the models folder in llama.cpp before you continue.

Next, install the dependencies needed by the Python conversion script.

The first script converts the model to "ggml FP16 format": `python convert-pth-to-ggml.py models/7B/ 1`. This should produce models/7B/ggml-model-f16.bin, another 13GB file.

The second script "quantizes the model to 4-bits". This produces models/7B/ggml-model-q4_0.bin, a 3.9GB file. This is the file we will use to run the model.
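The two file sizes quoted above can be sanity-checked with a little arithmetic. The sketch below is only a rough estimate under stated assumptions: the parameter count (about 6.74 billion for the 7B model) and the 4-bit block layout (32 weights sharing one 4-byte scale) are not given in this post, and the function names are made up for illustration.

```python
# Back-of-the-envelope size estimate for the two converted files.
# Assumption: the 7B model has roughly 6.74 billion parameters.
N_PARAMS = 6.74e9

GB = 1e9        # decimal gigabyte
GIB = 1024**3   # binary gigabyte


def fp16_bytes(n_params):
    """FP16 stores every weight in 2 bytes."""
    return n_params * 2


def q4_bytes(n_params, block_size=32, scale_bytes=4):
    """4-bit quantization estimate.

    Assumption: weights are grouped into blocks of `block_size`; each block
    stores its weights at 4 bits apiece plus one scale of `scale_bytes` bytes.
    """
    bytes_per_block = block_size * 4 / 8 + scale_bytes
    return n_params / block_size * bytes_per_block


if __name__ == "__main__":
    for label, size in (("FP16", fp16_bytes(N_PARAMS)),
                        ("4-bit", q4_bytes(N_PARAMS))):
        print(f"{label}: {size / GB:.2f} GB ({size / GIB:.2f} GiB)")
```

Under these assumptions the estimates land in the same range as the 13GB and 3.9GB figures. Part of any remaining gap comes down to whether a tool reports decimal or binary gigabytes.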

A troll attempted to add the torrent link to Meta's official LLaMA GitHub repo.

Here's how to set up LLaMA on a Mac with an Apple Silicon chip. Open your Terminal and enter these commands one by one, starting with git clone.
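When working through the commands one at a time, it helps to know which step has already finished. The following is a minimal sketch, not part of llama.cpp, that looks for the output files named in this walkthrough; the function name `conversion_stage` and the stage labels are hypothetical.

```python
from pathlib import Path

# Output paths named in the walkthrough, relative to the llama.cpp directory.
FP16_FILE = Path("models/7B/ggml-model-f16.bin")
Q4_FILE = Path("models/7B/ggml-model-q4_0.bin")


def conversion_stage(llama_cpp_dir):
    """Return a label (hypothetical names) for the furthest step completed."""
    root = Path(llama_cpp_dir)
    if (root / Q4_FILE).is_file():
        return "quantized"   # the 4-bit file exists, ready to run
    if (root / FP16_FILE).is_file():
        return "converted"   # FP16 conversion done, quantization pending
    if (root / "models" / "7B").is_dir():
        return "downloaded"  # model folder is in place, nothing converted yet
    return "missing"         # no models/7B folder found


if __name__ == "__main__":
    # Run from inside the llama.cpp directory.
    print(conversion_stage("."))
```

The check only tests that the files exist; it does not verify their size or contents.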

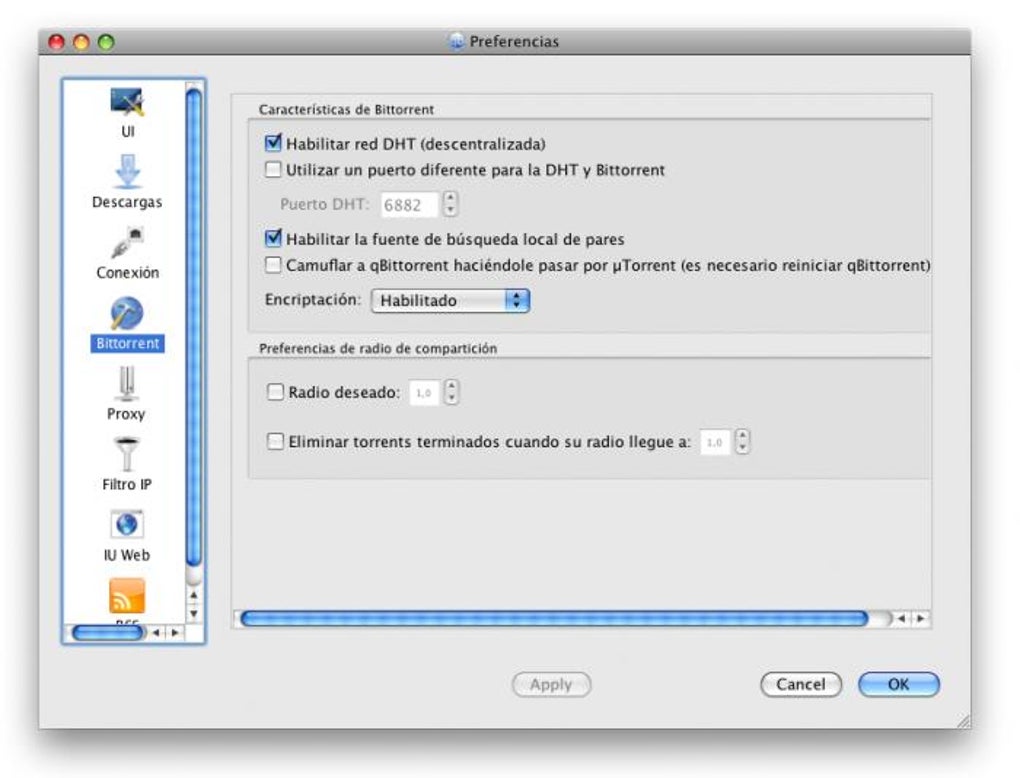

On March 3rd, user 'llamanon' leaked Meta's LLaMA model on 4chan's technology board /g/, enabling anybody to torrent it.

qBittorrent has a well-integrated and extensible search engine, with simultaneous search in many torrent search sites and category-specific search requests. It also offers RSS feed support with advanced download filters.

qBittorrent is an advanced, multi-platform BitTorrent client with a nice Qt4 user interface as well as a Web UI for remote control and an integrated search engine. The primary purpose of the qBittorrent client is to offer an alternative to other similar torrent managers. It aims to meet the needs of most users while using as little CPU and memory as possible, and it has a polished user interface for a smooth transition.
